Case Pattern: Predictive Maintenance AI for a Manufacturing Plant

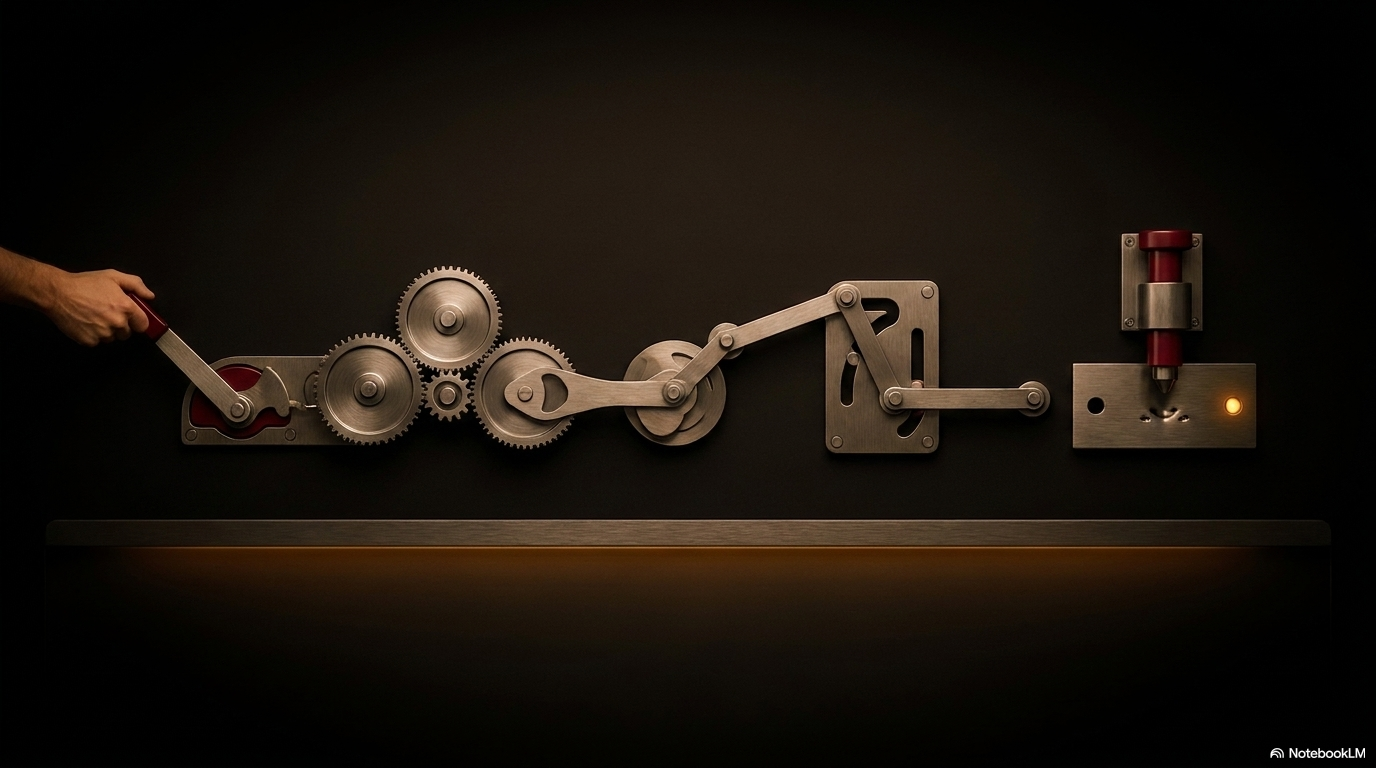

Architecture and lessons from deploying AI-driven predictive maintenance across 200+ machines in a continuous manufacturing operation.

A continuous manufacturing plant running 24/7 operations experienced an average of 14 unplanned equipment failures per month, each costing $50,000-$200,000 in lost production, emergency repairs, and downstream schedule disruption. The plant deployed an AI-driven predictive maintenance system to detect failures before they occur and schedule maintenance during planned downtime windows.

The Architecture

The system collects sensor data from 230 machines, processes it through anomaly detection models, and generates maintenance recommendations.

Data collection layer - Each machine is instrumented with 5-15 sensors measuring vibration, temperature, pressure, current draw, and acoustic emissions. Sensors report readings every 10 seconds to an IoT gateway. The gateway forwards data to a time-series database (Amazon Timestream) via IoT Core. Total data volume is approximately 2 billion data points per day.

Feature engineering - Raw sensor readings are transformed into features: rolling averages (1-hour, 24-hour, 7-day), rate-of-change indicators, frequency domain features from vibration data (FFT analysis), and cross-sensor correlation metrics. Feature computation runs as a streaming process, producing updated feature vectors every 5 minutes per machine.

Anomaly detection - A machine-specific model for each equipment type compares current feature vectors against the learned normal operating profile. The model outputs an anomaly score (0-100) and identifies which sensors are contributing most to the anomaly. Models were trained on 6 months of historical data per machine type, with known failure events labeled.

Recommendation engine - When an anomaly score exceeds the alert threshold, an LLM analyzes the anomaly pattern (which sensors, what direction, how fast the change) and generates a maintenance recommendation: likely failure mode, estimated time to failure, recommended action, and urgency level. The recommendation is cross-referenced against the machine’s maintenance history and spare parts inventory.

Key Lessons

Sensor data quality was the first six months of work. Before building any models, the team spent six months fixing sensor calibration issues, replacing faulty sensors, standardizing data formats, and building data quality monitoring. Models trained on bad sensor data produced useless predictions regardless of algorithm sophistication.

Machine-specific baselines were essential. A one-size-fits-all anomaly threshold produced too many false alarms on machines that naturally run hot and missed genuine anomalies on machines that normally run cool. Each machine type needed its own operating profile, and even individual machines of the same type had meaningful baseline differences.

False alarms nearly killed the project. The initial deployment produced 40+ alerts per day, of which fewer than 5% indicated actual problems. Maintenance teams began ignoring alerts entirely. Tuning the anomaly threshold to reduce false alarms to under 3 per day (with 70% true positive rate) restored trust and adoption.

Time-to-failure estimation was harder than detection. The system reliably detected that something was wrong but struggled to predict when failure would occur. A bearing showing degradation might fail in 2 days or 2 weeks depending on load conditions. The team learned to frame recommendations as urgency categories (immediate, this week, next scheduled downtime) rather than specific dates.

Results

Unplanned failures decreased from 14 to 4 per month within the first year. Maintenance labor efficiency improved by 25% through better scheduling. Spare parts inventory costs decreased by 15% through better demand forecasting. Total annual savings exceeded $3 million against a system cost of $800,000.